We’ve all been lied to about UGC.

We’re told you need real creators, at $800 a pop, to get conversions.

But that campaign you just saw rip across the feeds? It isn’t real.

UGC creators can be a headache. But most AI UGC looks fake.

The moment your brain sees "perfect," it scrolls. The real secret isn't the AI. It’s mining exact phrases of human frustration from Reddit, then forcing the AI into an "anti-polish" doctrine.

That best-performing video wasn't born in a UGC campaign.

I built this OpenClaw skill to mine 1-star reviews and generate this viral, realistic content in under an hour.

Get it free, open-source, below (scroll to the bottom).

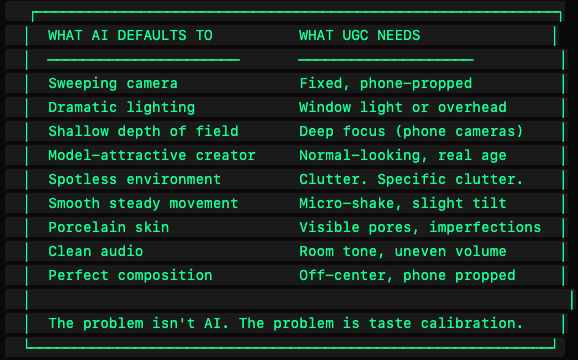

Why AI UGC fails (and it's not what you think)

Most people trying to make AI UGC hit the same wall.

They prompt Sora or Kling. They get something. The person is gorgeous. The lighting is perfect.

And nobody believes it for a second.

The problem is that it looks produced. And produced is the enemy of UGC.

UGC works for one reason: it looks like a real person pulled out their phone and yapped. That's it. The moment it looks like anything else (an ad, a brand video, a product commercial) your brain sees it as marketing and moves on.

I call this the anti-polish doctrine:

Every decision in this pipeline routes through one question: could a real person have shot this on their phone?

If the answer is no, fix it. If you can't tell, that's a pass.

The moment I knew the script was the problem

The second failure is more subtle than the visuals. And it costs you more.

In January, I ran a UGC test for a DTC client with four UGC vids.

Same creator, same product, same vibe. And one of the videos drove 3x the conversions of the others.

The winning script had one thing the others didn't: we stole a line directly from a 1-star Amazon review.

"I was doing everything right and my body wasn't cooperating."

Probably no copywriter would write that. It sounds too defeated. But someone who had actually lived it, and maybe typed it into an Amazon review at 1am, felt it. Viscerally.

That's the key.

Specific language helps viewers imagine the result. And generic language doesn’t. The first converts. The second doesn't.

This is why this skill starts with persona research. Sure, you can pull competitor reviews from Amazon, Trustpilot or Reddit yourself. Or, better yet, ask your OpenClaw to do it. You aren’t pulling information. You're pulling exact phrases.

You're looking for:

"I was doing everything right and my body wasn't cooperating" → goes into a script

"I finally feel like myself again" → useless, discard

"I wore a dress I hadn't put on since my daughter was born and cried in the fitting room" → this is what you want

What I built

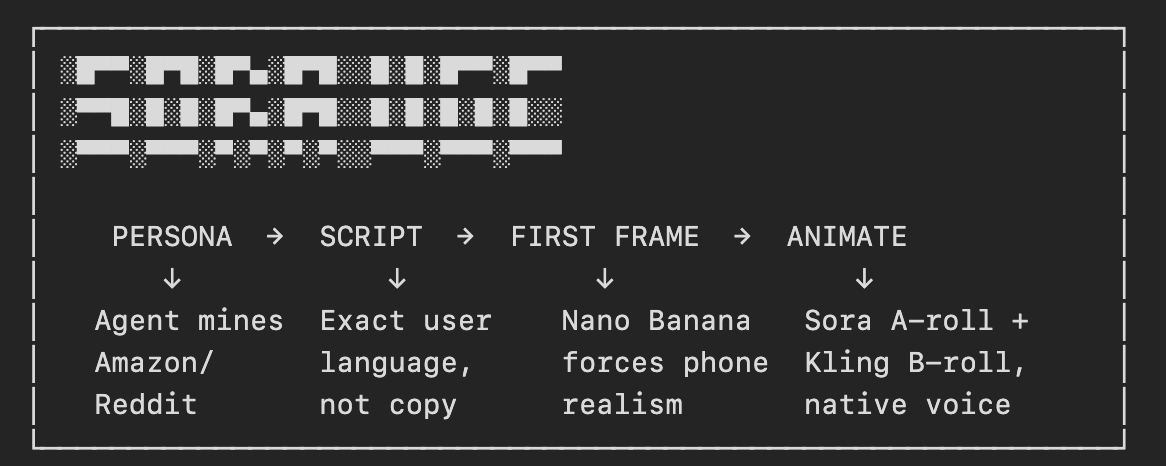

The sora-ugc skill is an OpenClaw pipeline that takes a brand brief and outputs finished UGC-style video campaigns.

Let me walk you through each piece.

The first-frame gate

Before we get to video, let's talk about the snap decision that happens in the first 3 seconds.

Your viewer's brain decides to stay or scroll before they are even conscious of why.

By the time they've read your hook (if you’re that lucky), that decision is already made. Frame 1 is a visual design problem.

This is why the pipeline generates frame 1 separately, with Nano Banana 2, before animating anything with Sora.

Here’s the prompt that makes it work:

Raw iPhone 15 Pro photo. Candid moment, unfiltered, authentic.

f/1.8 aperture, shallow depth of field, slight digital sensor grain.

REALISM RULES:

- Natural imperfections: visible skin texture and pores, flyaway hairs

- Under-eye area shows natural shadows, not concealed

- Environment has real clutter, not styled

- Lighting is practical (window, lamp, overhead) - never ring light

- Expression is candid mid-moment, not posed for camera

- Off-center framing, phone propped, not professional

Negative prompt: CGI, perfect skin, beauty filter, studio lighting,

ring light, retouched, airbrushed, stock photo, model pose

This block goes at the top of every frame 1 prompt. Before the color JSON. Before the scene description.
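The ordering rule above (realism block first, then the color JSON, then the scene description) can be sketched as a small prompt builder. This is only an illustration, not the skill's actual code: the function name `build_frame1_prompt`, the `COLOR PROFILE` and `SCENE` labels, and the keys in the example color dictionary are all assumptions.

```python
import json

# The photorealism pre-prompt, copied from the block above.
REALISM_PREPROMPT = """Raw iPhone 15 Pro photo. Candid moment, unfiltered, authentic.
f/1.8 aperture, shallow depth of field, slight digital sensor grain.
REALISM RULES:
- Natural imperfections: visible skin texture and pores, flyaway hairs
- Under-eye area shows natural shadows, not concealed
- Environment has real clutter, not styled
- Lighting is practical (window, lamp, overhead) - never ring light
- Expression is candid mid-moment, not posed for camera
- Off-center framing, phone propped, not professional
Negative prompt: CGI, perfect skin, beauty filter, studio lighting,
ring light, retouched, airbrushed, stock photo, model pose"""


def build_frame1_prompt(scene: str, color_profile: dict) -> str:
    """Assemble a frame 1 prompt: realism block, then color JSON, then scene."""
    parts = [
        REALISM_PREPROMPT,
        # Section labels are hypothetical; only the ordering comes from the text.
        "COLOR PROFILE (JSON): " + json.dumps(color_profile, sort_keys=True),
        "SCENE: " + scene.strip(),
    ]
    return "\n\n".join(parts)


if __name__ == "__main__":
    # Hypothetical color values, as if extracted from a reference image.
    colors = {"shadows": "#2b2a28", "midtones": "#8a7f73", "highlights": "#e9e2d6"}
    print(build_frame1_prompt("Coach Dan in his office, late afternoon light", colors))
```

Keeping the realism block as a constant means every frame attempt starts from the same anti-polish rules, and only the scene and color inputs change between iterations.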

The specific AI ‘tells’ this fights:

Skin smoothing. AI removes pores. Counter it: "visible skin texture and pores."

Symmetrical faces. AI makes faces too symmetrical. Counter it: "slight facial asymmetry."

Grey color cast. Nano Banana defaults to high contrast images. Fix it with JSON color extraction from a reference image that matches your environment.

Clean environments. AI backgrounds are spotless. Counter it with specific clutter items by name: coffee mug, phone charger, hair tie. Not "messy." Specific objects.

Perfect composition. AI centers and balances. Counter it: "off-center, phone propped on stack of books, not professional."

You might make 2-4 frame attempts before one passes. But that iteration is worth it, because the first frame locks the visual world for everything that comes next.

Two engines, one video

Most people pick one AI video model and deal with its limits. But this pipeline uses two:

Sora 2 handles A-roll: the talking head. This is where Sora 2's lip sync is genuinely good. It makes confessional, casual, real-person energy. Frame 1 into Sora i2v, structured motion prompt, download immediately.

A few months ago you could not put people into a Sora first frame, but now you can.

Kling 3 handles B-roll: everything that isn't the creator talking to camera. Product close-ups. Product in use. Establishing shots. Before/after scenes. You get it.

The same character reference image feeds both engines.

Same face in the Sora talking head. Same face in the Kling B-roll. Consistent creator across every clip in the video.

I use Kling for speed and the Element system. You can use Elements (@Element1 for the face, @Element2 for the product) to lock both a specific character AND a specific product into every B-roll shot.

And the script gets marked before you generate anything:

[A-ROLL: Sora - talking head, car, hook]

"so I'm sitting in my car right now… and I know that sounds

weird but like… this is the first time in three years I'm

leaving at a normal hour."

[B-ROLL: Kling - back office, billing screen]

(Show: cluttered back office, laptop open with billing dashboard,

@Element1 hunched over keyboard, late at night, overhead

fluorescent light.)

[A-ROLL: Sora - talking head, car, close]

"anyway. I'm going home. that's - yeah. that's the whole thing."

A-roll drives the timeline. B-roll swaps visuals only. Then the audio pipeline brings it all home.
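To show how a marked script like the one above could be split into per-engine jobs, here is a minimal parsing sketch. It assumes each marker follows the `[A-ROLL: Engine - tag, tag]` shape from the example. The `Segment` class and `parse_marked_script` function are hypothetical names, not part of the skill.

```python
import re
from dataclasses import dataclass, field

# Matches markers such as "[A-ROLL: Sora - talking head, car, hook]".
MARKER = re.compile(r"^\[(A-ROLL|B-ROLL):\s*(\w+)\s*-\s*(.+?)\]\s*$")


@dataclass
class Segment:
    roll: str          # "A-ROLL" or "B-ROLL"
    engine: str        # "Sora" or "Kling" in the example script
    tags: list[str]    # comma-separated descriptors from the marker
    lines: list[str] = field(default_factory=list)

    @property
    def text(self) -> str:
        """Dialogue or shot description, joined into one line."""
        return " ".join(self.lines)


def parse_marked_script(script: str) -> list[Segment]:
    """Split a marked script into ordered segments, one per marker."""
    segments: list[Segment] = []
    for raw in script.splitlines():
        line = raw.strip()
        if not line:
            continue
        match = MARKER.match(line)
        if match:
            roll, engine, tags = match.groups()
            segments.append(Segment(roll, engine, [t.strip() for t in tags.split(",")]))
        elif segments:
            segments[-1].lines.append(line)
        else:
            raise ValueError("text found before the first A-ROLL/B-ROLL marker")
    return segments


EXAMPLE = """
[A-ROLL: Sora - talking head, car, hook]
"so I'm sitting in my car right now... and I know that sounds weird"
[B-ROLL: Kling - back office, billing screen]
(Show: cluttered back office, laptop open with billing dashboard.)
[A-ROLL: Sora - talking head, car, close]
"anyway. I'm going home. that's - yeah. that's the whole thing."
"""

if __name__ == "__main__":
    for seg in parse_marked_script(EXAMPLE):
        print(seg.roll, seg.engine, seg.tags)
```

Parsing the markers up front makes it easy to check that every segment is assigned to an engine before any generation credits are spent.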

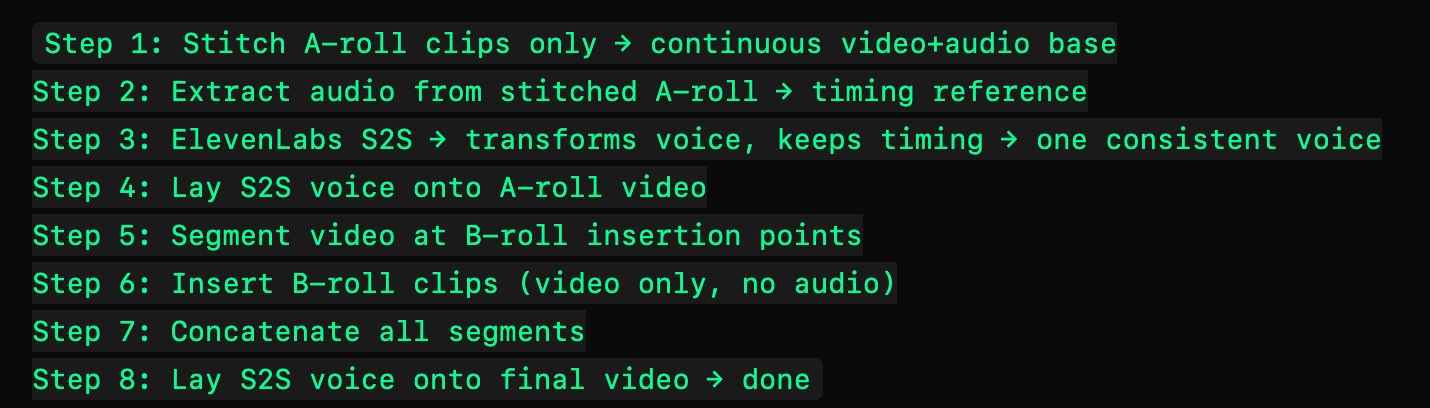

The voice consistency problem (and the fix)

Here's what breaks multi-clip UGC:

Every Sora clip has its own voice. But those voices can vary wildly between clips. Stitch three clips together and it sounds like James McAvoy in Glass.

Sora does not let you create characters that look like humans, and I don’t know about you, but most of my videos need humans in them.

The solution is ElevenLabs speech-to-speech, and the order you run it matters:

A-roll drives the timeline. Stitch the A-roll clips first, run S2S on that track, and only then insert B-roll. If you stitch A+B first and then try to sync audio, the timing breaks because B-roll adds visual duration that doesn't exist in the voice track.

Do NOT use text-to-speech for this step. TTS won't match the lip sync timing the clips already have. S2S transforms the voice character while preserving the exact original timing. Lip sync still matches, down to 0.01 seconds.

The six formats

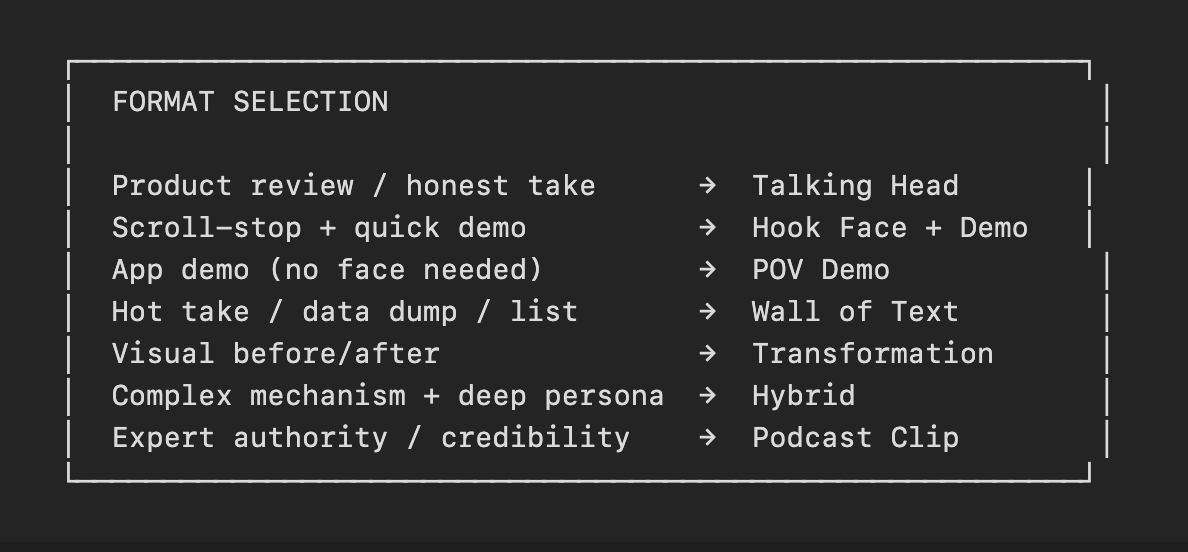

One skill. Six ways to use it. Each format exists because it produces a distinct behavior shift the others can't.

1. Talking Head Yapper - The workhorse. One person, one selfie angle, honest opinion. Phone propped, natural light, specific environment (bedroom, car, bathroom counter). 15-25 seconds, 2-3 Sora segments. Where most campaigns should start.

2. Hook Face + Demo - Classic format that actually works in high-volume UGC right now. Zero dialogue in the hook. Just a face with an emotion and a text overlay. There are six emotion categories (shocked, frustrated, crying, happy, confused, angry), with specific Sora prompts for each. 2-5 seconds. Hard cut to the demo. The hook face catches attention at the visual processing level before the conscious brain engages. The text gives the brain a reason to stay. The demo delivers the content.

3. Wall of Text - Overwhelmingly long on-screen text that creates a curiosity trap. 4-5 seconds of dense text - more than can be read in the clip duration. The viewer's brain starts reading and needs to finish (so they watch on a loop). This beats the scroll reflex by hijacking the completion impulse instead.

4. Visual Transformation Story - Named concept hook ("the divorce effect"), 3-5 image segments animated with Sora. The NAME is the scroll-stopper. "The divorce effect" stops the scroll. "How divorce changed me" doesn't.

5. Hybrid Transformation - This is the format that crossed $100k in a recent campaign. Talking head bookends with a voiceover slideshow mechanism bridge in the middle. The talking head creates identification. The slideshow maintains attention through the mechanism section where pure talking-head retention drops. The talking head close delivers the transformation moment with eye contact. For anything where "why it works" is necessary for conversion.

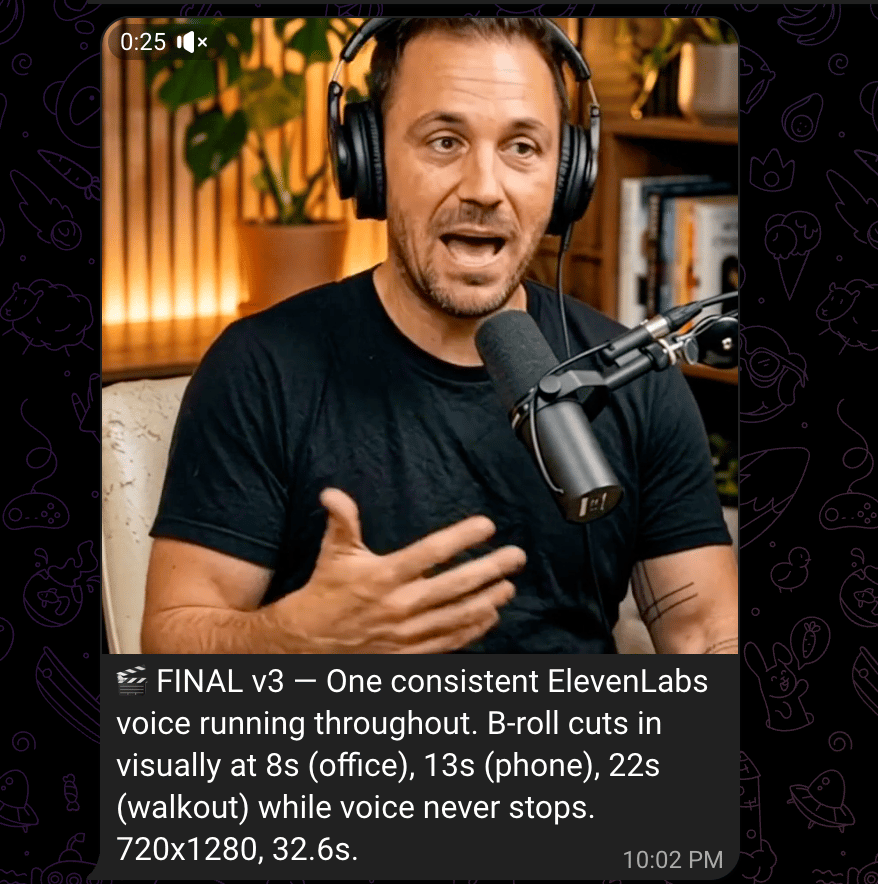

6. Podcast Clip - Fake podcast. Shure SM7B aesthetic, over-ear headphones, warm amber lighting, wood slat backdrop. Creator as "guest," mid-conversation. Viewers perceive podcast guests as credible experts, not advertisers. Authority from context, not claims. One note on prompting this: describe how the audio sounds, not what gear produced it. "Clean, natural podcast audio, close and present with subtle room tone" works. "Sounds like a Shure SM7B" does not.

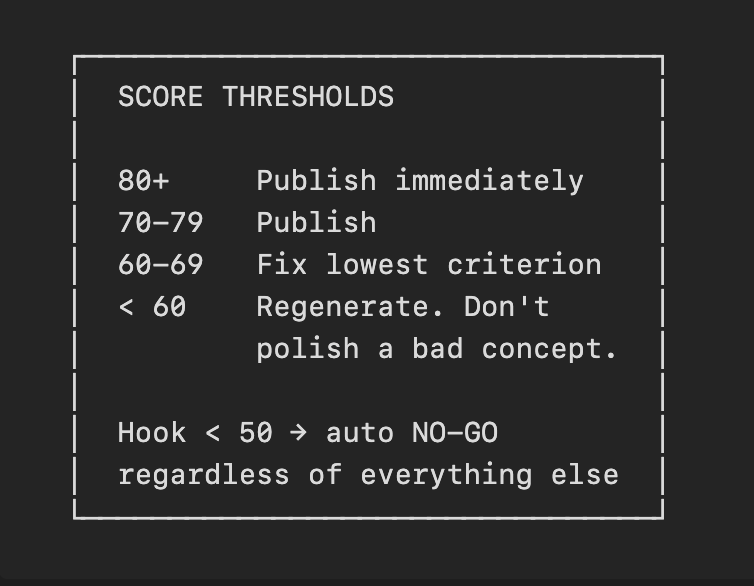

One update that I wish I had on the first round: mandatory virality scoring before anything gets published.

Seven criteria, each scored 0-100:

| Criterion | Weight |
|---|---|
| Hook strength | 20% |
| Scroll-stop power | 20% |
| Completion likelihood | 20% |
| Pacing flow | 15% |
| Emotional impact | 15% |
| Text readability | 10% |
| Shareability | 0% (tracked, not weighted) |

Hard gate: if hook strength < 50, automatic NO-GO. Doesn't matter what the other scores are.

Nothing else matters if they scroll past in the first two seconds.

The scoring uses a vision model (Gemini Flash or Claude) to evaluate either the full video or five evenly-spaced frames. It returns a weighted score, a GO/NO-GO, and, importantly, one specific, implementable fix with an estimated score improvement.
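The weighting and the hard gate can be written down in a few lines. The sketch below uses the weights from the table and the hook-strength gate of 50. The overall `go_threshold` is an assumption, since no cutoff for the weighted total is stated here, and the function and key names are hypothetical.

```python
# Weights from the criteria table. Shareability is tracked but not weighted.
WEIGHTS = {
    "hook_strength": 0.20,
    "scroll_stop_power": 0.20,
    "completion_likelihood": 0.20,
    "pacing_flow": 0.15,
    "emotional_impact": 0.15,
    "text_readability": 0.10,
    "shareability": 0.00,
}
HOOK_GATE = 50  # hard gate: hook strength below this is an automatic NO-GO


def virality_verdict(scores: dict[str, float], go_threshold: float = 70.0) -> tuple[float, str]:
    """Return (weighted score, "GO" or "NO-GO") for 0-100 criterion scores.

    go_threshold is an ASSUMED overall cutoff, not a value from the article.
    """
    missing = WEIGHTS.keys() - scores.keys()
    if missing:
        raise ValueError(f"missing criteria: {sorted(missing)}")
    for name in WEIGHTS:
        if not 0 <= scores[name] <= 100:
            raise ValueError(f"{name} must be between 0 and 100")

    weighted = sum(WEIGHTS[name] * scores[name] for name in WEIGHTS)
    if scores["hook_strength"] < HOOK_GATE:
        return weighted, "NO-GO"  # the other scores do not matter
    return weighted, "GO" if weighted >= go_threshold else "NO-GO"


if __name__ == "__main__":
    weak_hook = {name: 100 for name in WEIGHTS}
    weak_hook["hook_strength"] = 40
    print(virality_verdict(weak_hook))  # high total, still NO-GO because of the gate
```

Checking the gate separately from the weighted total keeps a strong video with a weak hook from slipping through on its average.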

Getting started

Download the skill from GitHub, run the dependency checker (it verifies all your API connections before you touch a prompt), and install. The whole setup takes 10 minutes.

What you need:

fal.ai key — Sora 2 for A-roll, Kling 3 for B-roll

OR Replicate key — Nano Banana for first frame generation

OpenRouter key — Gemini for virality scoring and video analysis

ElevenLabs key — optional, only needed for multi-clip formats where voice consistency across segments matters

OpenClaw installed

The workflow:

Step 1: Persona research. Tell your agent to mine Amazon reviews, Trustpilot, Reddit for your product category. It comes back with exact phrases, not paraphrases. You can also do this manually in about 60 minutes. Either way, this step is the whole thing.

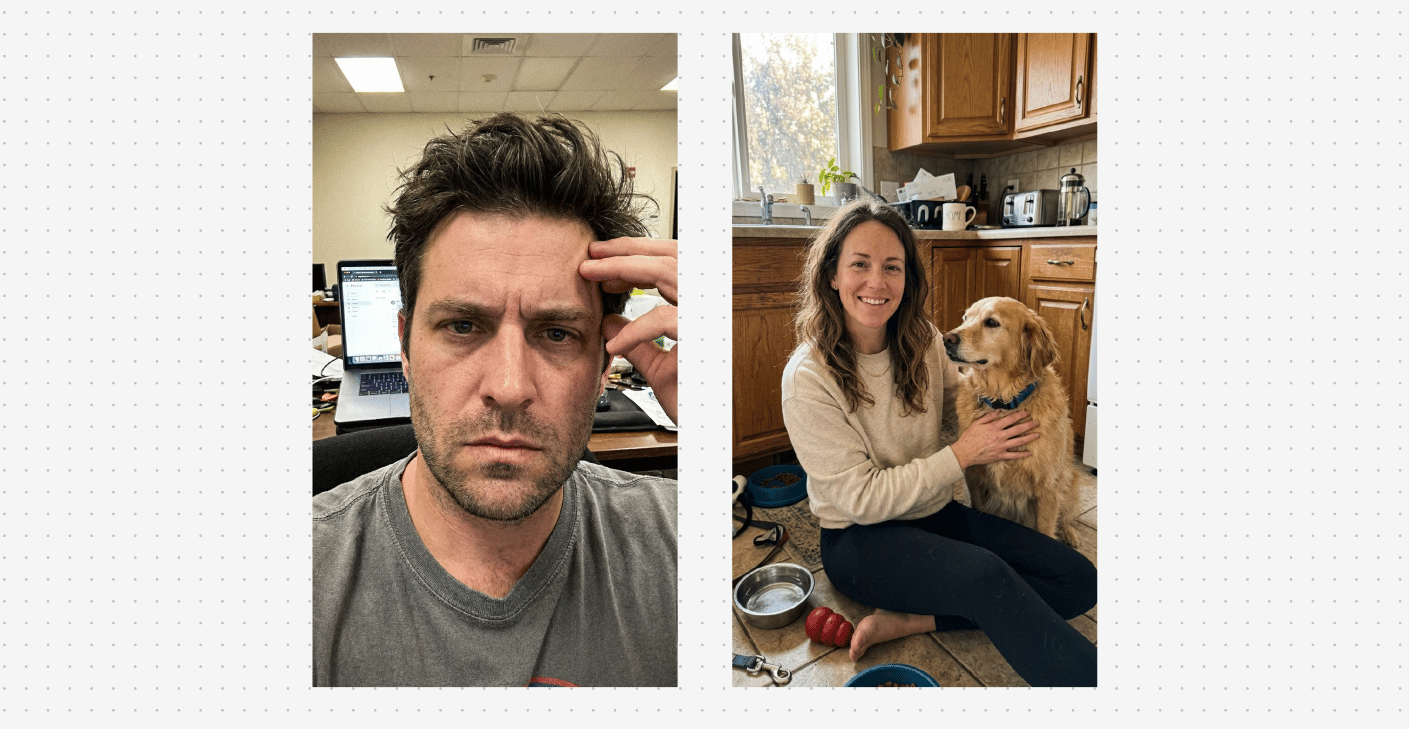

Step 2: Create your creator profile.

Tell the skill: "Create a creator profile for Coach Dan. Mid-40s, male, slightly weathered look, wearing a polo. Gym environment." One profile per creator. Locked identity: same face, same build, same distinguishing features across every clip, every campaign.

Step 3: Pick your format. Read references/format-library.md. Let the persona research inform the choice. Map your script to the shot breakdown BEFORE writing a word. Every segment should be accounted for.

Step 4: Script it.

Use exact phrases from your persona mining. Test every line. Remember: if it sounds like a brand wrote it, it's wrong. Read it out loud. If you'd never say it to a friend, rewrite it.

Step 5: Generate frame 1.

Tell the skill your scene, your creator profile, and your environment. The photorealism pre-prompt fires automatically. You just describe the moment. "Coach Dan in his office, late afternoon light, phone propped on desk, looking directly at camera with a tired-but-relieved expression." Generate context-aware frames per setting. Iterate here more than anywhere else.

Step 6: Animate A-roll.

Point the skill at your first frame and give it the motion prompt. Describe the micro-movements you want. "Minimal movement. Slight natural breathing. Small shoulder shift at the 4-second mark. Phone-propped stillness." The skill sends it to Sora via fal.ai and downloads the clip.

Step 7: B-roll via Kling.

The skill automatically pulls an environment frame from your A-roll and hands it to Kling as the starting image. Then you give Kling the B-roll description: "Cluttered back office, laptop open with a billing dashboard, overhead fluorescent light, late at night." Same visual world. Same floor. Same lighting.

Step 8: Audio pipeline (multi-clip).

Tell the skill to run the audio pipeline on your campaign folder. It stitches the A-roll, runs ElevenLabs S2S for voice unification, inserts B-roll, normalizes resolution, and assembles the final video. For single talking head clips, skip this step entirely. Sora's audio is already right.

Step 9: Post-production.

Apply color grade, grain (4K upscale trick), frame rate lock. If mixing Sora + Kling clips, grade Kling to match Sora's color world — they produce different color profiles by default.

Step 10: Captions — ALWAYS LAST.

Post-production first, captions last. Grain and color grade degrade clean caption pills if you apply them in the wrong order.

Tell the skill your caption lines and it auto-detects your video resolution and builds the overlay. Keep text in the green zone (TikTok/Reels block the top 210px, the right 120px, and the bottom 440px). Platform UI lives there. The skill knows where the safe zone is.
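For a sense of what the safe-zone math looks like, here is a rough sketch. It assumes the 210px, 120px, and 440px margins were measured on a 1080x1920 canvas and scales them proportionally for other resolutions. That reference size, the zero left margin, and the names `Rect` and `caption_safe_zone` are assumptions, not details of how the skill does it.

```python
from dataclasses import dataclass

# ASSUMPTION: the margins in the text are measured on a 1080x1920 canvas.
REF_WIDTH, REF_HEIGHT = 1080, 1920
TOP_BLOCKED, RIGHT_BLOCKED, BOTTOM_BLOCKED = 210, 120, 440


@dataclass(frozen=True)
class Rect:
    left: int
    top: int
    right: int
    bottom: int

    @property
    def width(self) -> int:
        return self.right - self.left

    @property
    def height(self) -> int:
        return self.bottom - self.top


def caption_safe_zone(width: int, height: int) -> Rect:
    """Return the caption-safe rectangle in pixels for a vertical video."""
    sx = width / REF_WIDTH
    sy = height / REF_HEIGHT
    return Rect(
        left=0,  # the text names no blocked margin on the left
        top=round(TOP_BLOCKED * sy),
        right=width - round(RIGHT_BLOCKED * sx),
        bottom=height - round(BOTTOM_BLOCKED * sy),
    )


if __name__ == "__main__":
    print(caption_safe_zone(1080, 1920))
    # Rect(left=0, top=210, right=960, bottom=1480)
```

On a 1080x1920 export that leaves a 960x1270 area for caption pills.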

Step 11: Virality score before you publish.

Hook < 50 = don't post. Fix the hook and regenerate.

Step 12: Batch campaigns.

Tell the skill to run your full creator × format matrix: "Run the gym client campaign with all three creators across talking head, hook-demo, and hybrid transformation formats." One brief. Multiple creators. Multiple formats. The skill queues and runs the whole batch.
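The creator × format matrix is just a cross product. A minimal sketch of how such a batch queue could be built is below. `build_batch` is a hypothetical helper, and the second and third creator names are made up for illustration (only Coach Dan appears in this walkthrough).

```python
from itertools import product


def build_batch(creators: list[str], formats: list[str]) -> list[dict[str, str]]:
    """Return one job per (creator, format) pair, in a stable order."""
    return [{"creator": c, "format": f} for c, f in product(creators, formats)]


if __name__ == "__main__":
    # "Coach Dan" is from the walkthrough; the other two names are hypothetical.
    creators = ["Coach Dan", "Creator B", "Creator C"]
    formats = ["talking head", "hook-demo", "hybrid transformation"]
    jobs = build_batch(creators, formats)
    print(len(jobs))  # 9
    for job in jobs:
        print(job["creator"], "x", job["format"])
```

Three creators across three formats gives nine videos from one brief, which is where the virality gate from Step 11 starts to earn its keep.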

Who this is for

You're running paid social and testing UGC angles and want speed

You manage DTC or app brands that need high-volume creative

You run an agency and want to productize video creative that doesn't look like AI slop

You have a brand with a real customer journey

Not for you if you want one-click UGC with no research or ‘taste’. The pipeline makes production fast. The insight and taste make it convert.

Get the skill

Full system free on GitHub:

Works across DTC, B2B SaaS, and consumer apps. Different categories, different personas, same flow.

No upsell. MIT license. Fork it.

Show me what you’re scaling. I read every reply.

Go big,

Matt

If this newsletter lit a fire under you, forward it to one person who would benefit.
